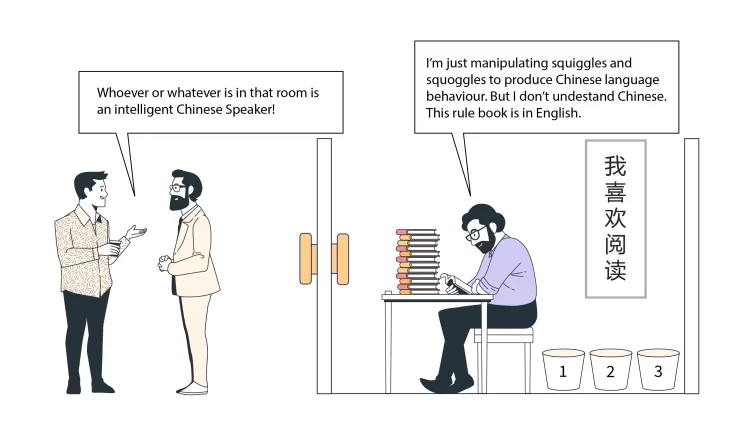

John Searle’s famous Chinese Room Argument has been the subject of great interest and debate in the philosophy of mind, artificial intelligence and cognitive science since its introduction in Searle’s 1980 article ‘Minds, Brains and Programs’. It is no overstatement to say that the article has held the attention of philosophers and computer scientists ever since. The Chinese Room is meant to scuttle the thesis of strong AI, which holds that computers have mental states. The argument arises out of the following, now familiar, story: Searle asks us to imagine a man seated in a sealed room with two openings, each in the form of a slot: one allowing input from a source outside the room and one allowing output to that source. The input from the outside source consists of Chinese squiggles that have …

Modern Information Systems

Case Study: Bossard Company’s Digital Transformation

Bossard is an international hardware conglomerate that specializes in the development of fastening technology and devices. It also uses a wide variety of smart and sensor technology to achieve a competitive advantage. It plans to utilize “smart factory” logistics and venture into new sectors and initiatives where its innovative approach may be useful.

Bossard Company’s Business Model

Bossard was originally founded as a family-owned hardware store in Switzerland. It sold small hardware and fastening products such as bolts, screws, rivets, and nuts. It then expanded to a regional, national, and international level in the $72 billion global fastening industry. It has continued to expand and remain profitable, with a 15% year-over-year increase in sales. It has over 70 service locations, 35 logistics centers, and 10 applications engineering laboratories. Bossard went from serving local individual consumers and regional businesses to cooperating with international industrial companies. It …

Cryptography – Keeping Information Private

Cryptography signifies that which is concealed or hidden. It is writing, or a brief description, that secretly conveys particular intelligence or words we may wish to communicate. Cryptography may be used as a form of clandestine communication. The art of cryptography is a legitimate form of communication that is acknowledged around the world. This is because times of danger and tension between individuals or nations can make its use inevitable, especially when a successful operation depends on ensuring that the enemy cannot understand the deliberations or communications between government agents. Cryptography is the study of mathematical techniques for different dimensions of security. Two closely related terms are cryptanalysis and cryptology. Cryptanalysis is the science of defeating those mathematical techniques, while cryptology …

Analysis of Chinese Room Thought Experiment in Artificial Intelligence

The mind has been at the center of philosophical debate for a very long time. John Searle attempted to explain understanding and the mind when, in 1980, he created his famous Chinese Room thought experiment. However, he is not discussing the human mind, as many philosophers do. Instead, he is looking into the minds of machines. Searle examines Artificial Intelligence and debates whether it can actually be comparable to human understanding. While the Chinese Room thought experiment was originally posed to counter the claims of Artificial Intelligence researchers, philosophers have also used it to examine the minds of others. It is a challenge to functionalism (the view that mental states are constituted solely by the role they play) and to the computational theory of mind (the view that the human mind is an information-processing system and that thinking is a form of computing), and it is related to many other famous thought experiments. In …

Problems of Artificial Intelligence (AI)

The rapid development of technology has brought drastic changes to people’s lives. The introduction of new approaches to work and rest triggered a reconsideration of traditional values and promoted a style of life characterized by the mass use of innovations and their integration into different aspects of society. Technologies continue to evolve, however, and today we can observe the development of artificial intelligence (AI), which is considered a technology that can think and make decisions in a way similar to human beings. Its creation is a significant step toward the future, as artificial intelligence can increase the efficiency of many tasks. Today, AI is understood as intelligence peculiar to machines, as opposed to the natural intelligence (NI) possessed by all living beings. Proponents of this technology outline numerous benefits that can be associated with its further development and implementation. Regarding …

Modern Block Cipher Algorithms in Cryptography

Cryptography signifies that which is concealed or hidden. It is writing, or a brief description, that secretly conveys particular intelligence or words we may wish to communicate. Cryptography may be used as a form of clandestine communication. The art of cryptography is a legitimate form of communication that is acknowledged around the world. Encryption is a process that uses an encryption algorithm to convert a message from plaintext into ciphertext, making the message unreadable to a third party. Block ciphers operate by breaking a message into fixed-size blocks, each of which is encrypted using the same key. An advantage of block ciphers is that a large message can be divided into smaller blocks.

DES (Data Encryption Standard)

The Data Encryption Standard is a symmetric-key algorithm used to encrypt electronic data. The algorithm was developed in the 1970s by IBM as …
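
The block-splitting idea in the excerpt above (a message broken into fixed-size blocks, each encrypted with the same key) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the per-block step is a toy XOR with the key, which is not DES and offers no real security, and the helper names (`pad`, `split_blocks`, `toy_encrypt_block`) are hypothetical. The 8-byte block size is chosen to match the 64-bit blocks DES operates on, and the padding follows the common PKCS#7 convention.

```python
BLOCK_SIZE = 8  # bytes; DES also works on 64-bit (8-byte) blocks


def pad(data: bytes, block_size: int = BLOCK_SIZE) -> bytes:
    """PKCS#7-style padding: append n bytes, each of value n."""
    n = block_size - len(data) % block_size
    return data + bytes([n]) * n


def unpad(data: bytes) -> bytes:
    """Remove the padding added by pad()."""
    return data[:-data[-1]]


def split_blocks(data: bytes, block_size: int = BLOCK_SIZE) -> list[bytes]:
    """Break data into consecutive fixed-size blocks."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def toy_encrypt_block(block: bytes, key: bytes) -> bytes:
    """Toy stand-in for a real block cipher: XOR the block with the key.

    XOR is its own inverse, so the same function also decrypts.
    This is NOT secure and is NOT how DES transforms a block.
    """
    return bytes(b ^ k for b, k in zip(block, key))


def encrypt(message: bytes, key: bytes) -> bytes:
    """Pad the message, split it into blocks, encrypt each with the same key."""
    if len(key) != BLOCK_SIZE:
        raise ValueError("key must be exactly BLOCK_SIZE bytes")
    blocks = split_blocks(pad(message))
    return b"".join(toy_encrypt_block(block, key) for block in blocks)


def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    """Reverse encrypt(): decrypt each block, then strip the padding."""
    if len(key) != BLOCK_SIZE:
        raise ValueError("key must be exactly BLOCK_SIZE bytes")
    blocks = split_blocks(ciphertext)
    return unpad(b"".join(toy_encrypt_block(block, key) for block in blocks))


key = b"8bytekey"
ciphertext = encrypt(b"attack at dawn", key)
print(decrypt(ciphertext, key))
```

Because every block is processed independently with the same key, identical plaintext blocks produce identical ciphertext blocks in this sketch. Real systems typically combine a block cipher with a mode of operation to avoid leaking that pattern.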